This page has been machine-translated from the original page.

Inspired by Introduction to OS Code Reading ~Understanding the Kernel Mechanisms with UNIX V6, I’m reading xv6 OS.

Since UNIX V6 itself does not run on x86 CPUs, I’ve decided to read the source code of kash1064/xv6-public: xv6 OS, which is a fork of the xv6 OS repository that has adapted UNIXv6 to run on the X86 architecture.

Previously, we read through the build and startup process of xv6 OS.

Now we’re going to proceed with reading the kernel.

First, let’s start reading from main.c.

Basically, we’ll read the C source code and trace the processing flow by performing gdb debugging while referencing kernel.asm and kernel.sym as needed.

Table of Contents

- main function

- kinit2 function

- Bonus: Calling the panic function with the debugger

- Bonus: About memset

- Summary

- References

main function

In the previous article, we saw up to calling the main function in main.c.

From here, we’ll look at the behavior of the kernel itself.

First, I’ve extracted only the lines of the main function from main.c.

static void startothers(void);

static void mpmain(void) __attribute__((noreturn));

extern pde_t *kpgdir;

extern char end[]; // first address after kernel loaded from ELF file

// Bootstrap processor starts running C code here.

// Allocate a real stack and switch to it, first

// doing some setup required for memory allocator to work.

int

main(void)

{

kinit1(end, P2V(4*1024*1024)); // phys page allocator

kvmalloc(); // kernel page table

mpinit(); // detect other processors

lapicinit(); // interrupt controller

seginit(); // segment descriptors

picinit(); // disable pic

ioapicinit(); // another interrupt controller

consoleinit(); // console hardware

uartinit(); // serial port

pinit(); // process table

tvinit(); // trap vectors

binit(); // buffer cache

fileinit(); // file table

ideinit(); // disk

startothers(); // start other processors

kinit2(P2V(4*1024*1024), P2V(PHYSTOP)); // must come after startothers()

userinit(); // first user process

mpmain(); // finish this processor's setup

}Functions are lined up and executed in order from top to bottom.

In this article, we’ll look at the kinit1 and kinit2 functions.

kinit1 function

The kinit1 function that is executed first is a function defined in kalloc.c.

The xv6 kernel initializes the memory allocator by calling kinit1 and kinit2.

// Initialization happens in two phases.

// 1. main() calls kinit1() while still using entrypgdir to place just

// the pages mapped by entrypgdir on free list.

// 2. main() calls kinit2() with the rest of the physical pages

// after installing a full page table that maps them on all cores.

void

kinit1(void *vstart, void *vend)

{

initlock(&kmem.lock, "kmem");

kmem.use_lock = 0;

freerange(vstart, vend);

}

void

kinit2(void *vstart, void *vend)

{

freerange(vstart, vend);

kmem.use_lock = 1;

}At runtime, the kernel needs to allocate and free physical memory regions for page tables, processes, user memory, kernel stacks, pipe buffers, and so on.

In the case of xv6 OS, the physical memory from the end of the address where the kernel itself is stored up to PHYSTOP is used as the allocation region.

PHYSTOP is defined in memlayout.h as follows:

#define EXTMEM 0x100000 // Start of extended memory

#define PHYSTOP 0xE000000 // Top physical memory

#define DEVSPACE 0xFE000000 // Other devices are at high addressesThe allocation and deallocation of this memory region is done on a page-by-page basis.

The kernel maintains freed pages as a linked list, and when allocating, it removes that page from the list.

Reference: xv6 - DRAFT as of September 4, 2018 P32-33

Reference: Memory Management, Address Space, Page Tables

Arguments of kinit1 function

In kinit1, the allocator is initialized to make the region from the kernel’s end address received as an argument up to 4MiB available “without locks”.

From here until the kinit2 function is called, it operates in a state where addresses from the kernel end to 4MiB can be allocated without locks.

This appears to be because most of the processing called within the main function cannot use locks or memory regions larger than 4MiB.

The kinit1 function is given vstart and vend as arguments.

First, the argument corresponding to the first argument vstart is the following variable declared in main.c.

It has an extern declaration and acquires PROVIDE(end = .); defined in kernel.ld.

extern char end[]; // first address after kernel loaded from ELF fileAlso, P2V(4*1024*1024) is given to vend.

P2V is a macro defined in memlayout.h that simply converts a physical address to a virtual address (= simply adds KERNBASE).

#define V2P(a) (((uint) (a)) - KERNBASE)

#define P2V(a) ((void *)(((char *) (a)) + KERNBASE))When called, 0x80400000 is given, which is 4*1024*1024 (=4MiB) plus KERNBASE.

When I actually checked the arguments at the time of calling kinit1 with gdb, it looked like this:

kinit1 (vstart=0x801154a8, vend=0x80400000)The order is reversed, but in kinit1, these two values are given as arguments to the freerange function to secure the memory region.

Since I want to read the source code in order from the top, I’ll check the initlock function first.

initlock function

The initlock function is a function defined in spinlock.c that initializes the spinlock structure inside the kmem structure.

The kmem structure is defined in kalloc.c as follows:

struct {

struct spinlock lock;

int use_lock;

struct run *freelist;

} kmem;Also, the spinlock structure is the following structure defined in spinlock.h.

The comment says mutual exclusion, and it seems to be used when performing locks.

// Mutual exclusion lock.

struct spinlock {

uint locked; // Is the lock held?

// For debugging:

char *name; // Name of lock.

struct cpu *cpu; // The cpu holding the lock.

uint pcs[10]; // The call stack (an array of program counters)

// that locked the lock.

};The initlock function receives a pointer to this spinlock structure and a name string to initialize it.

void initlock(struct spinlock *lk, char *name)

{

lk->name = name;

lk->locked = 0;

lk->cpu = 0;

}When calling this function from kinit1, we can see that it’s initialized with the name “kmem”.

void kinit1(void *vstart, void *vend)

{

initlock(&kmem.lock, "kmem");

kmem.use_lock = 0;

freerange(vstart, vend);

}The next line also initializes the use_lock value to 0.

Here the lock is disabled.

In kinit2, which is called after a while, kmem.use_lock is set to 1.

Also, here we’ve only initialized kmem and no lock has been performed.

To perform a lock using the structure initialized here, we need to call the void acquire(struct spinlock *lk) function defined in spinlock.c.

We’ll check this function when it’s actually used.

Reference: Homework: xv6 locking

freerange function

The freerange function is a function defined in kalloc.c that takes vstart and vend as arguments and frees the physical address region aligned using PGROUNDUP.

void freerange(void *vstart, void *vend)

{

char *p;

p = (char*)PGROUNDUP((uint)vstart);

for(; p + PGSIZE <= (char*)vend; p += PGSIZE)

kfree(p);

}PGROUNDUP is defined in mmu.h as follows:

// Page directory and page table constants.

#define NPDENTRIES 1024 // # directory entries per page directory

#define NPTENTRIES 1024 // # PTEs per page table

#define PGSIZE 4096 // bytes mapped by a page

#define PTXSHIFT 12 // offset of PTX in a linear address

#define PDXSHIFT 22 // offset of PDX in a linear address

#define PGROUNDUP(sz) (((sz)+PGSIZE-1) & ~(PGSIZE-1))

#define PGROUNDDOWN(a) (((a)) & ~(PGSIZE-1))PGROUNDUP is a macro that aligns the address received as an argument to a multiple of PGSIZE.

This returns the smallest multiple of PGSIZE greater than or equal to argument sz.

~ is the Bitwise NOT operator.

Also, (PGSIZE-1) is the maximum remainder for PGSIZE, so by adding this and then taking the AND with the bit-inverted (pgsize-1), we can round down the fraction below PGSIZE to perform alignment.

Reference: c - What do PGROUNDUP and PGROUNDDOWN in xv6 mean? - Stack Overflow

The calculation was a bit difficult to understand, so I created a reversed Python script.

def rev_PGROUNDUP(sz):

print("***************rev_PGROUNDUP***************")

pgsize = 4096

num1 = (sz)+(pgsize-1)

num2 = ~(pgsize-1)

result = num1 & num2

print("pgsize : {}".format(pgsize))

print("pgsize[bin] : {}".format(bin(pgsize)))

print("(sz)+(pgsize-1) : {}".format(num1))

print("(sz)+(pgsize-1)[bin] : {}".format(bin(num1)))

print("~(pgsize-1) : {}".format(num2))

print("~(pgsize-1)[bin] : {}".format(bin(num2)))

print("result : {}".format(result))

print("result[bin] : {}".format(bin(result)))

return resultWhen this is executed, it looks like this:

rev_PGROUNDUP(100)

***************rev_PGROUNDUP***************

pgsize : 4096

pgsize[bin] : 0b1000000000000

(sz)+(pgsize-1) : 4195

(sz)+(pgsize-1)[bin] : 0b1000001100011

~(pgsize-1) : -4096

~(pgsize-1)[bin] : -0b1000000000000

result : 4096

result[bin] : 0b1000000000000When 100 is input as sz, it’s aligned and rounded up, resulting in a return value of 4096.

Let’s go back to the freerange function.

vstart is given as the argument to PGROUNDUP, and the return value is stored in p.

void freerange(void *vstart, void *vend)

{

char *p;

p = (char*)PGROUNDUP((uint)vstart);

for(; p + PGSIZE <= (char*)vend; p += PGSIZE)

kfree(p);

}Here, p becomes an address aligned by PGSIZE.

From here until reaching the address of vend, physical addresses are freed by the kfree function every PGSIZE (every page), and the page list is initialized.

kfree function

The kfree function frees memory and adds the freed page to the page list.

It’s defined in kalloc.c.

The starting address of the page to be freed is used as an argument.

When called from the kinit1 function, the value given as an argument is an address aligned by PGSIZE, so it’s guaranteed to be aligned on a 4096-byte boundary.

//PAGEBREAK: 21

// Free the page of physical memory pointed at by v,

// which normally should have been returned by a

// call to kalloc(). (The exception is when

// initializing the allocator; see kinit above.)

void kfree(char *v)

{

struct run *r;

if((uint)v % PGSIZE || v < end || V2P(v) >= PHYSTOP)

panic("kfree");

// Fill with junk to catch dangling refs.

memset(v, 1, PGSIZE);

if(kmem.use_lock)

acquire(&kmem.lock);

r = (struct run*)v;

r->next = kmem.freelist;

kmem.freelist = r;

if(kmem.use_lock)

release(&kmem.lock);

}When the function is called, it declares a run structure.

The run structure is defined in kalloc.c and is a singly-linked list that only has a reference to the next run structure.

It’s stored in kmem as a freelist that stores freed pages.

struct run {

struct run *next;

};

struct {

struct spinlock lock;

int use_lock;

struct run *freelist;

} kmem;The next line checks whether the address received as an argument is aligned by PGSIZE and that there is sufficient space for page size allocation.

If this check fails, the panic function is called.

if((uint)v % PGSIZE || v < end || V2P(v) >= PHYSTOP) panic("kfree");The panic function will be described later.

The next line fills the address region for PGSIZE from the page start address v with 1.

// Fill with junk to catch dangling refs.

memset(v, 1, PGSIZE);memset will also be described later.

After the memory region initialization by memset is completed, the following lines are executed:

if(kmem.use_lock) acquire(&kmem.lock);

r = (struct run*)v;

r->next = kmem.freelist;

kmem.freelist = r;

if(kmem.use_lock) release(&kmem.lock);During the execution of kinit1, locks are disabled, so acquire and release are not called.

Therefore, a new run structure for one page is added to the head of kmem.freelist, and the processing of kfree ends.

This completes the processing of the freerange function, so the processing of kinit1 also ends, and we proceed to execute the next function in main.c.

kinit2 function

After executing some kernel processing from kinit1, the kinit2 function is called.

The kinit2 function enables locks and secures a larger memory region.

The operation is almost the same as kinit1.

kinit2(P2V(4*1024*1024), P2V(PHYSTOP)); // must come after startothers()4MiB address is allocated to vstart, and 0xE000000, which is set as the end of the address region, is given to vend.

void kinit2(void *vstart, void *vend)

{

freerange(vstart, vend);

kmem.use_lock = 1;

}Except for the line kmem.use_lock = 1;, it’s the same as kinit1.

After freeing the region from vstart to vend with freerange and adding it to the page list, locks are enabled and it ends.

After this, since locks are enabled, the acquire and release functions will be called for memory allocation and deallocation.

Both the acquire and release functions are defined in spinlock.c.

About locks in xv6

Before reading the acquire and release functions, let’s check the lock specification in xv6.

xv6 OS operates in a multiprocessor environment.

In a multiprocessor environment, multiple CPUs share physical memory resources.

Therefore, locks are a mechanism to prevent problems or conflicts caused by each CPU’s processing arbitrarily rewriting shared resources.

By performing exclusive control of arbitrary memory regions through locks, we can make that memory region operable by only one CPU.

Reference: xv6

In xv6 OS, two types of locks are implemented: spin locks and sleep locks.

The locks performed by the acquire and release functions are spin locks.

Reference: Spinlock - Wikipedia

With spin locks, when a process tries to secure a memory resource, if the target is already locked, it loops and waits until the lock is released.

acquire function

The acquire function takes a pointer to a spinlock structure as an argument and performs a lock.

What we want to do here is simple: attempt to set 1 to locked in the spinlock structure, and loop the processing to wait until the lock can be secured.

// Acquire the lock.

// Loops (spins) until the lock is acquired.

// Holding a lock for a long time may cause

// other CPUs to waste time spinning to acquire it.

void acquire(struct spinlock *lk)

{

pushcli(); // disable interrupts to avoid deadlock.

if(holding(lk))

panic("acquire");

// The xchg is atomic.

while(xchg(&lk->locked, 1) != 0);

// Tell the C compiler and the processor to not move loads or stores

// past this point, to ensure that the critical section's memory

// references happen after the lock is acquired.

__sync_synchronize();

// Record info about lock acquisition for debugging.

lk->cpu = mycpu();

getcallerpcs(&lk, lk->pcs);

}Let me also repost the definition of the spinlock structure.

// Mutual exclusion lock.

struct spinlock {

uint locked; // Is the lock held?

// For debugging:

char *name; // Name of lock.

struct cpu *cpu; // The cpu holding the lock.

uint pcs[10]; // The call stack (an array of program counters)

// that locked the lock.

};Let’s look at the processing of the acquire function in order.

pushcli(); // disable interrupts to avoid deadlock.

if(holding(lk)) panic("acquire");The pushcli function is a function defined in spinlock.c.

Like cli, it suppresses interrupts, but here it implements nested interrupt disable instructions using a stack.

This processing prevents deadlocks that would occur if locks were held with interrupts enabled when securing locks with xchg later.

To resume interrupts, call the popcli function.

The popcli function is not called in the acquire function.

The popcli function corresponding to the pushcli function executed in the acquire function is executed by the release function.

// Pushcli/popcli are like cli/sti except that they are matched:

// it takes two popcli to undo two pushcli. Also, if interrupts

// are off, then pushcli, popcli leaves them off.

void pushcli(void)

{

int eflags;

eflags = readeflags();

cli();

if(mycpu()->ncli == 0) mycpu()->intena = eflags & FL_IF;

mycpu()->ncli += 1;

}

void popcli(void)

{

if(readeflags()&FL_IF)

panic("popcli - interruptible");

if(--mycpu()->ncli < 0)

panic("popcli");

if(mycpu()->ncli == 0 && mycpu()->intena)

sti();

}The next holding function checks whether the CPU is locked.

// Check whether this cpu is holding the lock.

int holding(struct spinlock *lock)

{

int r;

pushcli();

r = lock->locked && lock->cpu == mycpu();

popcli();

return r;

}mycpu() is a function defined in proc.c.

xv6 OS manages a cpu structure for each CPU.

The cpu structure is defined in proc.h as follows, and various information is stored, such as the process currently executing on that CPU and the number of nested spin locks.

// Per-CPU state

struct cpu {

uchar apicid; // Local APIC ID

struct context *scheduler; // swtch() here to enter scheduler

struct taskstate ts; // Used by x86 to find stack for interrupt

struct segdesc gdt[NSEGS]; // x86 global descriptor table

volatile uint started; // Has the CPU started?

int ncli; // Depth of pushcli nesting.

int intena; // Were interrupts enabled before pushcli?

struct proc *proc; // The process running on this cpu or null

};The mycpu function returns a pointer to reference the cpu structure of the current CPU.

Interrupts must be disabled before calling.

In other words, if(holding(lk)) panic("acquire"); references the spinlock structure in the argument and confirms that the CPU is locked.

Next, let’s check the lock setting.

// The xchg is atomic.

while(xchg(&lk->locked, 1) != 0);Here, to prevent conflicts during lock operations, the operation needs to be atomic.

xchg is a special x86 instruction to achieve this.

If a lock is set, xchg(&lk->locked, 1) returns 1 and the loop continues.

If the lock is released, a new lock is set by xchg.

After that, the loop ends and the lock is secured.

Reference: OSDev.org • View topic - How can xchg return a value?

// Tell the C compiler and the processor to not move loads or stores

// past this point, to ensure that the critical section's memory

// references happen after the lock is acquired.

__sync_synchronize();

// Record info about lock acquisition for debugging.

lk->cpu = mycpu();

getcallerpcs(&lk, lk->pcs);Next, the __sync_synchronize function is a built-in function that performs a memory barrier.

Reference: c++ - What does _syncsynchronize do? - Stack Overflow

Then, the cpu structure acquired by the mycpu function is stored in lk->cpu.

Finally, the getcallerpcs function is called.

This stores the call stack for debugging.

// Record the current call stack in pcs[] by following the %ebp chain.

void getcallerpcs(void *v, uint pcs[])

{

uint *ebp;

int i;

ebp = (uint*)v - 2;

for(i = 0; i < 10; i++){

if(ebp == 0 || ebp < (uint*)KERNBASE || ebp == (uint*)0xffffffff)

break;

pcs[i] = ebp[1]; // saved %eip

ebp = (uint*)ebp[0]; // saved %ebp

}

for(; i < 10; i++)

pcs[i] = 0;

}It seems the information output when the panic function is called is stored here.

release function

For the release function, there are no special points to note beyond what has been described so far, so I’ll omit the details.

// Release the lock.

void release(struct spinlock *lk)

{

if(!holding(lk)) panic("release");

lk->pcs[0] = 0;

lk->cpu = 0;

// Tell the C compiler and the processor to not move loads or stores

// past this point, to ensure that all the stores in the critical

// section are visible to other cores before the lock is released.

// Both the C compiler and the hardware may re-order loads and

// stores; __sync_synchronize() tells them both not to.

__sync_synchronize();

// Release the lock, equivalent to lk->locked = 0.

// This code can't use a C assignment, since it might

// not be atomic. A real OS would use C atomics here.

asm volatile("movl $0, %0" : "+m" (lk->locked) : );

popcli();

}The last line asm volatile("movl $0, %0" : "+m" (lk->locked) : ); sets 0 to locked and releases the lock.

Bonus: Calling the panic function with the debugger

The panic function is defined in console.c.

It seems to disable CPU interrupts and generate an infinite loop so that no further processing occurs.

void panic(char *s)

{

int i;

uint pcs[10];

cli();

cons.locking = 0;

// use lapiccpunum so that we can call panic from mycpu()

cprintf("lapicid %d: panic: ", lapicid());

cprintf(s);

cprintf("\n");

getcallerpcs(&s, pcs);

for(i=0; i<10; i++)

cprintf(" %p", pcs[i]);

panicked = 1; // freeze other CPU

for(;;)

;

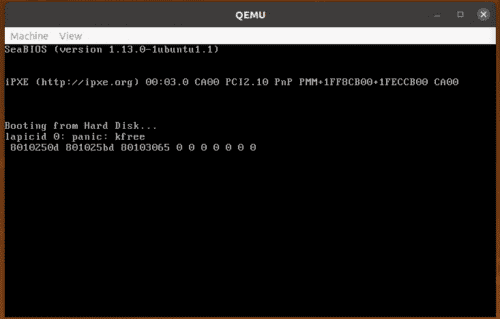

}To see what’s actually output, I decided to trigger a panic with gdb.

Execute the following commands in gdb in order:

# Connect to QEMU and set a breakpoint just before calling the panic function

$ target remote localhost:26000

$ b *0x80102499

$ continue

# Tamper with the flags register to call the panic function and execute

$ info registers eflags

eflags 0x93 [ IOPL=0 SF AF CF ]

$ set $CF = 0

$ set $eflags ^= (1 << $CF)

$ info registers eflags

eflags 0x92 [ IOPL=0 SF AF ]

$ continueWhen I checked the QEMU console, I could see that panic was called when the kfree function was called.

The addresses displayed on the last line look like the return address stack.

Bonus: About memset

memset is defined in string.c as follows:

void* memset(void *dst, int c, uint n)

{

if ((int)dst%4 == 0 && n%4 == 0){

c &= 0xFF;

stosl(dst, (c<<24)|(c<<16)|(c<<8)|c, n/4);

} else

stosb(dst, c, n);

return dst;

}memset is a function that can write values to arbitrary memory regions, but it seems to have a different implementation from the following glibc memset.

void *

inhibit_loop_to_libcall

memset (void *dstpp, int c, size_t len)

{

long int dstp = (long int) dstpp;

if (len >= 8)

{

size_t xlen;

op_t cccc;

cccc = (unsigned char) c;

cccc |= cccc << 8;

cccc |= cccc << 16;

if (OPSIZ > 4)

/* Do the shift in two steps to avoid warning if long has 32 bits. */

cccc |= (cccc << 16) << 16;

/* There are at least some bytes to set.

No need to test for LEN == 0 in this alignment loop. */

while (dstp % OPSIZ != 0)

{

((byte *) dstp)[0] = c;

dstp += 1;

len -= 1;

}

/* Write 8 `op_t' per iteration until less than 8 `op_t' remain. */

xlen = len / (OPSIZ * 8);

while (xlen > 0)

{

((op_t *) dstp)[0] = cccc;

((op_t *) dstp)[1] = cccc;

((op_t *) dstp)[2] = cccc;

((op_t *) dstp)[3] = cccc;

((op_t *) dstp)[4] = cccc;

((op_t *) dstp)[5] = cccc;

((op_t *) dstp)[6] = cccc;

((op_t *) dstp)[7] = cccc;

dstp += 8 * OPSIZ;

xlen -= 1;

}

len %= OPSIZ * 8;

/* Write 1 `op_t' per iteration until less than OPSIZ bytes remain. */

xlen = len / OPSIZ;

while (xlen > 0)

{

((op_t *) dstp)[0] = cccc;

dstp += OPSIZ;

xlen -= 1;

}

len %= OPSIZ;

}

/* Write the last few bytes. */

while (len > 0)

{

((byte *) dstp)[0] = c;

dstp += 1;

len -= 1;

}

return dstpp;

}Reference: glibc/memset.c at master · lattera/glibc

memset can write specific values to arbitrary address ranges at high speed, and is used for initialization, etc.

It seems to be implemented to operate faster than a simple for loop implementation.

Reference: c++ - Memset Definition and use - Stack Overflow

The xv6 memset is a much simpler implementation than the glibc one, but it seems to be implemented to operate faster than a for loop by using the stosb and stosl instructions.

Let’s actually check that memset is working using gdb.

First, set a breakpoint just before executing memset.

$ target remote localhost:26000

$ b memset

$ continueSince we set a breakpoint, when I looked at the contents from the page start address for 0x1000 bytes, everything was 0.

# View the memory state just before executing memset

$ x/1000 0x80116000

0x80116000:0x000000000x000000000x000000000x00000000

{ omitted }

0x80116f90:0x000000000x000000000x000000000x00000000Next, when I looked at the same memory region just after memset finished, I could confirm that all byte values were 1. (It’s hard to tell because the output is separated every 4 bytes.)

# View the memory state just after executing memset

$ finish

$ $ x/1000 0x80116000

0x80116000:0x010101010x010101010x010101010x01010101

{ omitted }

0x80116f90:0x010101010x010101010x010101010x01010101Summary

We’ve finally progressed to the kernel itself.

However, reading just the first function of the main function has become quite voluminous, so I’ll compile the continuation in the next article.