This page has been machine-translated from the original page.

In the previous article, Search Algorithms Powering AntiVirus 1, I looked at an implementation of the Aho–Corasick algorithm and how it is used in ClamAV.

This time, again using ClamAV’s code as a base, I will summarize Boyer–Moore (BM), Wu-Manber (WM), and related techniques that are applied to signature pattern matching.

Table of Contents

(Background) Pattern Matching in ClamAV (Recap)

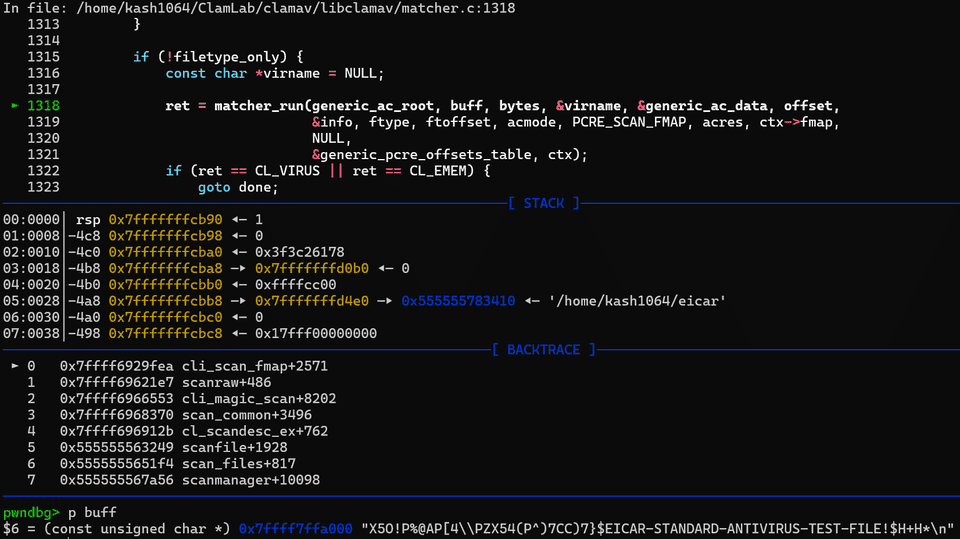

As mentioned in the previous article, ClamAV’s core file-scanning function, cli_scan_fmap, is, simply put, a function that scans a memory map abstracted as the cl_fmap structure.

Inside it, processing such as hash-based checks and pattern matching against the loaded signature database is performed.

After performing scan initialization, the cli_scan_fmap function reads up to SCANBUFF (0x20000 bytes) from the file map as one chunk and then scans it with the matcher_run function.

This matcher_run function is called with the following parameters and scans the received buffer.

static inline cl_error_t matcher_run(

const struct cli_matcher *root,

const unsigned char *buffer, uint32_t length,

const char **virname, struct cli_ac_data *mdata,

uint32_t offset,

const struct cli_target_info *tinfo,

cli_file_t ftype,

struct cli_matched_type **ftoffset,

unsigned int acmode,

unsigned int pcremode,

struct cli_ac_result **acres,

fmap_t *map,

struct cli_bm_off *offdata,

struct cli_pcre_off *poffdata,

cli_ctx *ctx

)Here, the cli_bm_scanbuff function first performs scanning with a Boyer-Moore-based algorithm, after which the cli_ac_scanbuff function performs pattern matching with the Aho-Corasick algorithm.

I covered the cli_ac_scanbuff function in the previous article, so this time I will focus on scanning with the Boyer-Moore method through the cli_bm_scanbuff function.

What Is the Boyer–Moore (BM) Algorithm

The BM algorithm is a fast algorithm for searching whether a specified keyword (signature) is contained in a particular text.

The basic idea of the BM algorithm is to preprocess the keyword pattern and achieve fast keyword search by comparing characters from the end of the keyword toward the beginning and by avoiding unnecessary checks as much as possible when testing whether the target string matches the keyword.

The standard BM algorithm generally refers to the version that applies the two rules described below: the Bad Character Rule and the Good Suffix Rule.

This standard BM algorithm has a worst-case time complexity of O(N*M) (text length N × keyword length M), but it seems that by adding the rule known as the Galil rule, the worst-case complexity can be reduced to linear time.

There is also a derivative of the BM algorithm called the Boyer–Moore–Horspool (BMH) algorithm, which uses only the Bad Character Rule and further simplifies BM.

In signature pattern matching for AntiVirus software such as ClamAV, this Boyer–Moore–Horspool (BMH) method is apparently used in some cases for several reasons, so in this article I will mainly focus on BMH.

Bad Character Rule

In the BM algorithm, the Bad Character Rule is a rule for skipping unnecessary checks based on where a character that mismatched the keyword appears inside the keyword.

The diagram in the following article was especially easy to understand for the Bad Character Rule.

Reference: 文字列検索アルゴリズム③ ー Boyer-Moore法 #競技プログラミング - Qiita

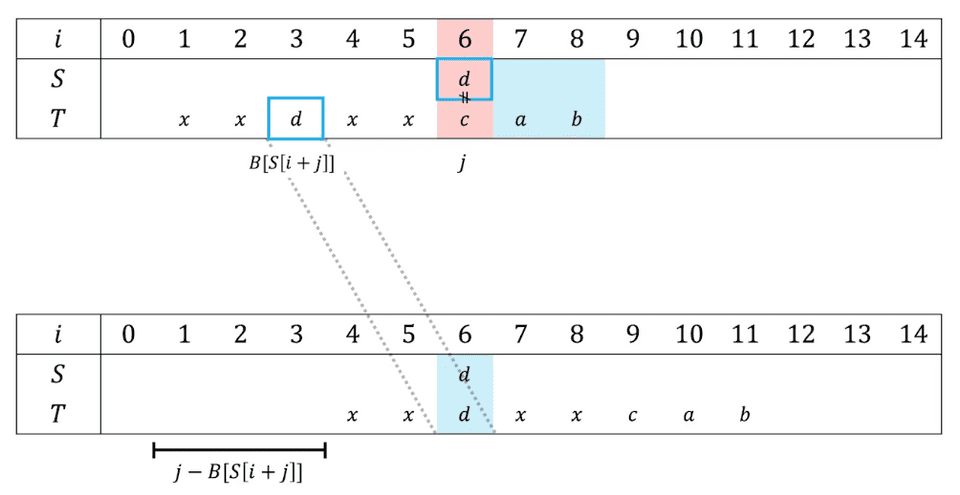

When applying the BM algorithm, after aligning the beginning of keyword T with the current search position in the target string S, you compare the target string and the keyword starting from the word at the end of the keyword.

When a mismatch occurs between the target character and the keyword character, the Bad Character Rule makes string search efficient by shifting the next search position as far as possible.

With the Bad Character Rule, a shift table is created in advance by preprocessing the search keyword (that is, how many characters the search position can be shifted when a mismatch occurs), and the search position is skipped as much as possible depending on the character that mismatched at the end.

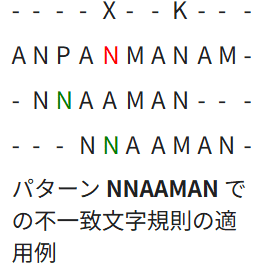

For example, in the example explained on Wikipedia, the keyword NNAAMAN and the text are compared from the end, and the rule is evaluated at the position of the mismatched N.

If this N also appears to the left of that position, the search position is skipped to that occurrence. If the character does not appear in the keyword at all, then the keyword can no longer match there, so the search position is skipped to the next character after it.

Reference: ボイヤー-ムーア文字列検索アルゴリズム - Wikipedia

Implementing the Bad Character Rule

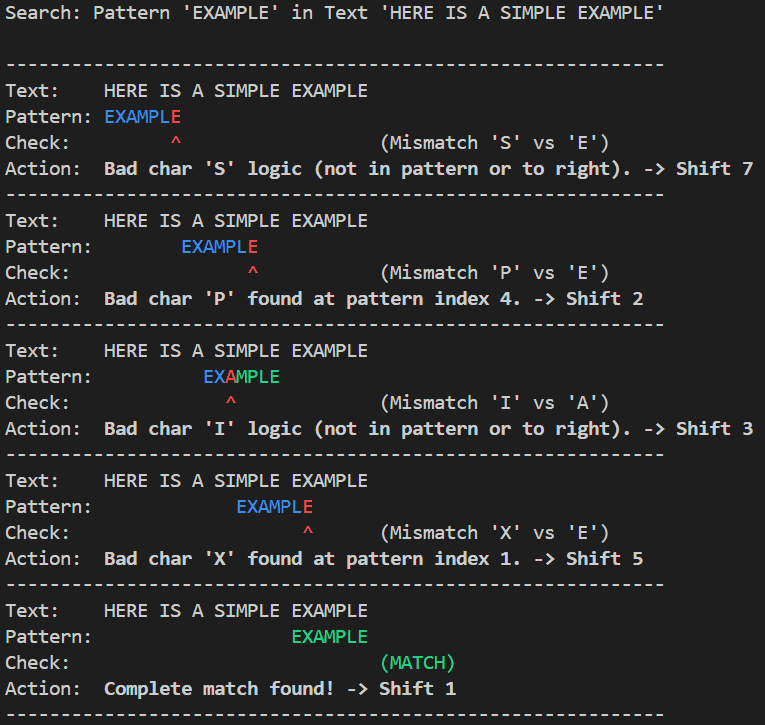

To deepen my understanding of string search with the Bad Character Rule, I created a Python script that visualizes each search step.

In the BMSearcher class in the following script, the search keyword is preprocessed to build a Bad Character Table.

class BMSearcher:

def __init__(self, pattern: str):

self.pattern = pattern

self.m = len(pattern)

# Build the Bad Character Table

self._bad_char_table: Dict[str, int] = self._build_bad_char_table()

def _build_bad_char_table(self) -> Dict[str, int]:

# Skip table: Dict[str, int]

# Characters in the search keyword and the index of the last occurrence of each character in the keyword

table = {}

for i, char in enumerate(self.pattern):

table[char] = i

return tableThe Bad Character Table is defined as a dictionary whose keys are characters contained in the search keyword and whose values indicate the index where that character last appears (the rightmost occurrence) in the keyword.

This makes it possible to determine how far the next search position can be skipped when a character mismatch occurs during the search.

The code that implements the search process above is as follows.

@dataclass(frozen=True)

class SearchStep:

shift: int # Shift position

mismatch_index: Optional[int] # Index where the mismatch occurred (relative position from the end)

bad_char: Optional[str] # The mismatched character

shift_amount: int # Shift amount for the next step

description: str # Description

class BMSearcher:

def __init__(self, pattern: str):

self.pattern = pattern

self.m = len(pattern)

# Build the Bad Character Table

self._bad_char_table: Dict[str, int] = self._build_bad_char_table()

def _build_bad_char_table(self) -> Dict[str, int]:

# Skip table: Dict[str, int]

# Characters in the search keyword and the index of the last occurrence of each character in the keyword

table = {}

for i, char in enumerate(self.pattern):

table[char] = i

return table

# Generator that returns the search state so the process can be visualized

def search_generator(self, text: str) -> Iterator[SearchStep]:

n = len(text)

shift = 0

while shift <= n - self.m:

j = self.m - 1

# Compare the keyword and the text from the end toward the beginning

while j >= 0 and self.pattern[j] == text[shift + j]:

j -= 1

# If the string matches completely

if j < 0:

yield SearchStep(

shift=shift,

mismatch_index=None,

bad_char=None,

shift_amount=1,

description="Complete match found!"

)

shift += 1

# If a mismatch occurs during the search

else:

text_char = text[shift + j]

# Bad Character Rule formula: shift_amount = max(1, mismatch_index - last_occurrence_index)

last_occurrence = self._bad_char_table.get(text_char, -1)

# Shift amount for skipping (shift by at least 1 to avoid an infinite loop)

shift_amount = max(1, j - last_occurrence)

reason = (

f"Bad char '{text_char}' found at pattern index {last_occurrence}."

if last_occurrence != -1 and last_occurrence < j

else f"Bad char '{text_char}' logic (not in pattern or to right)."

)

yield SearchStep(

shift=shift,

mismatch_index=j,

bad_char=text_char,

shift_amount=shift_amount,

description=reason

)

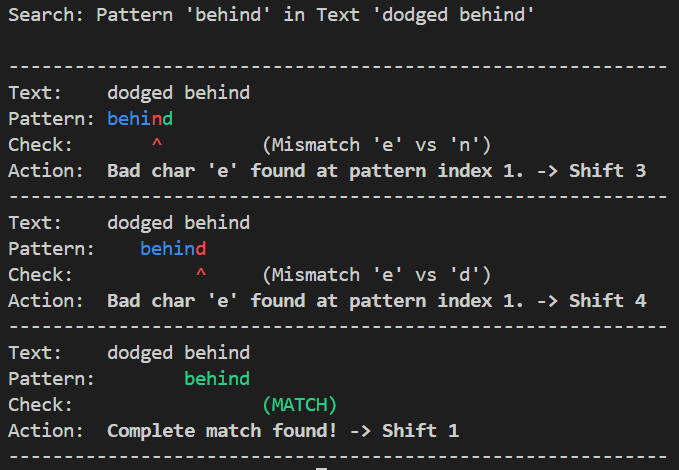

shift += shift_amountWhen you run a script based on the above, you can see character search from the end using the Bad Character Rule and how the search position shifts when a mismatch occurs, as shown below.

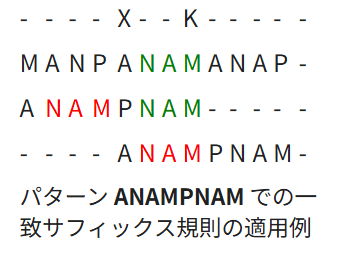

Good Suffix Rule

The Good Suffix Rule is an additional rule used to search more efficiently than the Bad Character Rule alone.

At a high level, the idea of the Good Suffix Rule is that if a suffix matched when evaluating from the end, that matched portion is treated as a good suffix and used to determine the next search position, enabling more efficient scanning.

Reference: ボイヤー-ムーア文字列検索アルゴリズム - Wikipedia

In general, the algorithm that uses both the Bad Character Rule and the Good Suffix Rule is regarded as the standard BM algorithm.

In some cases, the rule called the Galil rule is added as well.

However, although the standard BM algorithm with the Good Suffix heuristic, or the BM algorithm extended with the Galil rule to guarantee linear-time search, is theoretically more efficient, there seem to be cases where it is not suitable in practice because of implementation complexity and the cost of preprocessing.

For that reason, in use cases that require implementation simplicity and good average speed—such as general text editor search functions and AntiVirus pattern matching like the target of this article—it seems common to use BM with only the Bad Character Rule, the simplified Boyer–Moore–Horspool (BMH) algorithm, or algorithms based on it.

Note: In the case of AntiVirus software such as ClamAV, it appears that processing closer to the Wu-Manber method is used rather than a simple BMH algorithm, in order to search multiple patterns simultaneously.

Reference: AFASTALGORITHMFORMULTI-PATTERNSEARCHING

Reference: Practical Fast Searching in Strings

Reference: Horspool algorithm

Boyer–Moore–Horspool (BMH)

As described above, the Boyer–Moore–Horspool (BMH) algorithm is a simplified algorithm based on BM with the Good Suffix Rule removed.

For the BM algorithm extended with the Galil rule, the worst-case time complexity of string-search matching is guaranteed to be O(N), so in theory it is regarded as faster than the BMH algorithm, which is O(NM).

However, in ClamAV, a BMH-based algorithm is used for pattern matching.

In practice, although the BM algorithm including the Good Suffix Rule and the Galil rule is theoretically faster than BMH in terms of worst-case time complexity, if you also take preprocessing cost into account, BMH can provide better average performance in some use cases, so it is not unusual for applications to adopt BMH instead of BM.

Reference: Boyer-Moore vs Boyer-Moore-Horspool Search Algorithms | Search Algorithms | StudyPlan.dev

Reference: Boyer-Moore algorithm - コードの恵み

The clearest explanation I found of the difference between BMH and the BM algorithm’s Bad Character Rule was on the following page.

Reference: Algorithms with Python / 文字列の探索

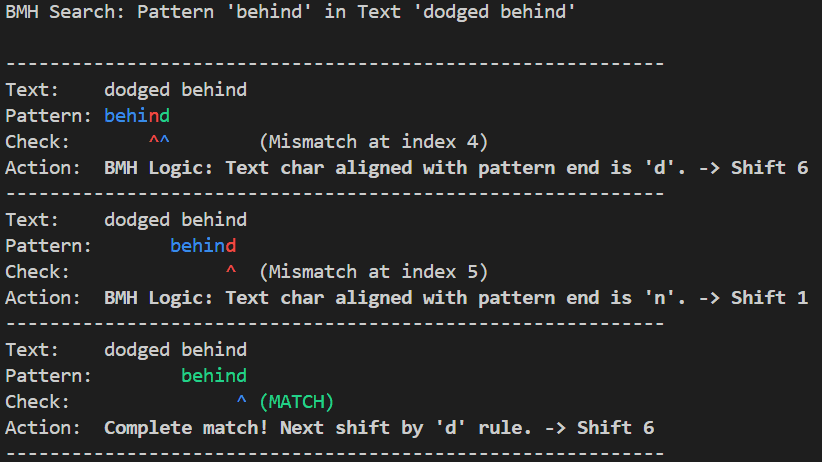

Put simply, BMH is a technique that always focuses on the last character when a pattern mismatch occurs and skips the search position as much as possible.

As mentioned earlier, in the Bad Character Rule, when a mismatch occurs, the character contained in the search keyword is used as a key, and skipping is performed based on a dictionary that indicates the index where that character last appears (the rightmost occurrence) in the keyword.

In other words, as in the example below, if the mismatch occurs at the second character from the end, e, during the first search, the Bad Character Rule performs the next matching step by aligning the position of the mismatched e.

On the other hand, when using the BMH algorithm, regardless of where the mismatch occurs, the algorithm focuses on the last character and slides the search position so that the last character aligns with the rightmost occurrence of that character within the keyword.

(As in the example below, if the last character d is not included in the keyword, the check is performed by aligning the beginning of the keyword with the next character.)

Because of this, when using the BMH algorithm there is no need to consider where the check failed, and only the last character needs to be examined. This makes the implementation simpler than the Bad Character Rule and also allows the search position to be shifted farther than with the Bad Character Rule.

The result of implementing the table-building process used by BMH in Python is as follows.

class BMHSearcher:

def __init__(self, pattern: str):

self.pattern = pattern

self.m = len(pattern)

self._shift_table: Dict[str, int] = self._build_shift_table()

def _build_shift_table(self) -> Dict[str, int]:

table = {}

# Register all characters except the last character in the pattern (from 0 to m-2)

for i in range(self.m - 1):

char = self.pattern[i]

table[char] = self.m - 1 - i

return tableThis is almost the same as the Bad Character Rule implementation, but the difference is that the information stored in the table is not the index where a character in the keyword last appears in the keyword, but rather the distance from the final character.

Wu-Manber (WM)

The Wu-Manber (WM) method is a search algorithm designed to search multiple patterns at the same time based on the idea of the BMH algorithm.

The BMH idea of trying to shift as far as possible by using a mismatch at the last character becomes more efficient as the probability rises that the last character does not appear in the keyword.

However, if the number of patterns to search increases, the probability that some character in the pattern set matches the last character also increases, and eventually the algorithm converges to a state where a large shift width can no longer be obtained.

To solve this multi-pattern search problem, the WM method evaluates and skips search positions based not on a single character but usually on blocks of 2 or 3 characters.

In the WM method, in the preprocessing stage, the minimum pattern length in the pattern set is taken as m, the block size—usually 2 or 3—is taken as B, and three tables, SHIFT, HASH, and PREFIX, are created using the first m characters of each pattern.

The SHIFT table is an extension of the BM shift table and is used during text scanning to determine how many characters in the text can be skipped.

The HASH table contains pointers to lists of patterns that share the same suffix (the last B characters of the string obtained by taking the first m characters of the pattern), and the PREFIX table contains the hash value of each pattern’s prefix (the first B characters). These are used during pattern matching.

I implemented this preprocessing in Python as follows.

class WuManber:

def __init__(self, patterns: List[str], block_size: int = 2) -> None:

if not patterns:

raise ValueError("Invalid patterns.")

self.patterns = patterns

self.block_size = block_size

self.min_len = min(len(p) for p in patterns)

if self.min_len < self.block_size:

raise ValueError(f"Block size {self.block_size} is larger than the minimum pattern length {self.min_len}.")

# SHIFT table

self.shift_table: Dict[str, int] = {}

# HASH table

self.hash_table: Dict[str, List[int]] = defaultdict(list)

# PREFIX table / Python's built-in hash() returns an integer, so use it as is

self.prefix_table: List[int] = []

self._build_tables()

def _get_hash(self, text_block: str) -> int:

return hash(text_block)

def _build_tables(self) -> None:

for idx, pattern in enumerate(self.patterns):

prefix_part = pattern[:self.block_size]

self.prefix_table.append(self._get_hash(prefix_part))

limit_pattern = pattern[:self.min_len]

# shift_table: {'ap': 3, 'pp': 2, 'pl': 1, 'le': 0, 'am': 3}

for i in range(self.min_len - self.block_size + 1):

block = limit_pattern[i : i + self.block_size]

shift = self.min_len - 1 - (i + self.block_size - 1)

if block not in self.shift_table:

self.shift_table[block] = shift

else:

self.shift_table[block] = min(self.shift_table[block], shift)

if shift == 0:

self.hash_table[block].append(idx)First, in the initialization process below, various tables are created using patterns, the list of search keywords, and the default block size of 2.

def __init__(self, patterns: List[str], block_size: int = 2) -> None:

if not patterns:

raise ValueError("Invalid patterns.")

self.patterns = patterns

self.block_size = block_size

self.min_len = min(len(p) for p in patterns)

if self.min_len < self.block_size:

raise ValueError(f"Block size {self.block_size} is larger than the minimum pattern length {self.min_len}.")

# SHIFT table

self.shift_table: Dict[str, int] = {}

# HASH table

self.hash_table: Dict[str, List[int]] = defaultdict(list)

# PREFIX table / Python's built-in hash() returns an integer, so use it as is

self.prefix_table: List[int] = []

self._build_tables()At this point, the length of the shortest pattern among all patterns is stored as min_len. (If this min_len is smaller than the block size, search using the standard WM method cannot be used.)

The _build_tables method that actually creates the tables is implemented as follows.

def _build_tables(self) -> None:

for idx, pattern in enumerate(self.patterns):

prefix_part = pattern[:self.block_size]

self.prefix_table.append(self._get_hash(prefix_part))

limit_pattern = pattern[:self.min_len]

for i in range(self.min_len - self.block_size + 1):

block = limit_pattern[i : i + self.block_size]

shift = self.min_len - 1 - (i + self.block_size - 1)

if block not in self.shift_table:

self.shift_table[block] = shift

else:

self.shift_table[block] = min(self.shift_table[block], shift)

if shift == 0:

self.hash_table[block].append(idx)Here, the code loops over the list of patterns. First, it appends to the PREFIX table the integer value produced by hashing the first B characters of the pattern (pattern[:self.block_size]) with Python’s built-in hash() function.

Note: This makes it possible to retrieve the hash of the leading block from the PREFIX table using the pattern index.

Next, after storing the first m characters of the pattern as limit_pattern = pattern[:self.min_len], the code runs a loop for m - B + 1 iterations and creates the SHIFT table there.

Here, blocks of length B are taken out one by one from the first m characters of each pattern, and a safely shiftable amount is added to the SHIFT table for each block.

If a block is the last block of the pattern of length m (shift == 0), the pattern index is added to the list keyed by that block, creating the HASH table.

block = limit_pattern[i : i + self.block_size]

shift = self.min_len - 1 - (i + self.block_size - 1)

if block not in self.shift_table:

self.shift_table[block] = shift

else:

self.shift_table[block] = min(self.shift_table[block], shift)

if shift == 0:

self.hash_table[block].append(idx)With this, all the tables needed to perform search with the WM method have been created.

Next, let’s look at the text search process (search function) that uses these tables.

def search(self, text: str) -> Iterator[Tuple[int, str]]:

n = len(text)

m = self.min_len

B = self.block_size

if n < m:

return

idx = m - 1

default_shift = m - B + 1

while idx < n:

# Obtain the suffix block and determine the shift amount

suffix_block = text[idx - B + 1 : idx + 1]

shift = self.shift_table.get(suffix_block, default_shift)

# If the shift amount is 0, compare with candidate patterns (otherwise shift)

if shift == 0:

candidate_indices = self.hash_table.get(suffix_block, [])

text_start_pos = idx - m + 1

text_prefix_block = text[text_start_pos : text_start_pos + B]

text_prefix_hash = self._get_hash(text_prefix_block)

for p_idx in candidate_indices:

if self.prefix_table[p_idx] != text_prefix_hash:

continue

pattern = self.patterns[p_idx]

p_len = len(pattern)

if text_start_pos + p_len <= n:

if text[text_start_pos : text_start_pos + p_len] == pattern:

yield (text_start_pos, pattern)

idx += 1

else:

idx += shiftWhen the search function is called, it first takes m characters from the text, extracts the last B characters as suffix_block, and compares them with the SHIFT table.

If the last B characters do not exist in the SHIFT table, the search position is moved by m - B + 1. If they do exist in the SHIFT table, the search position is moved by the shift amount stored in the table.

If the shift amount is 0, there is a possibility that a pattern is contained at the current search position, so the list of pattern indices whose suffix includes that block is extracted from the HASH table as candidate_indices.

Next, after retrieving from the PREFIX table the hash of the leading block of the patterns whose suffix contains that block, the code compares it with the hash of the leading block in the text at the current search position. If they match, it performs a full-text comparison against the pattern.

The reason for doing prefix matching with the PREFIX table here seems to be that when searching for words in English text, suffix blocks shared by many words, such as tion or ee, may match frequently.

Therefore, by checking not only the suffix block but also the leading block, and then performing a full-text search only for the candidates that pass both checks, the process can be made more efficient.

Reference: AFASTALGORITHMFORMULTI-PATTERNSEARCHING

Reference: Variations of Wu-Manber String Matching Algorithm

Reference: wumanber/wumanber.hpp at master · bubiche/wu_manber

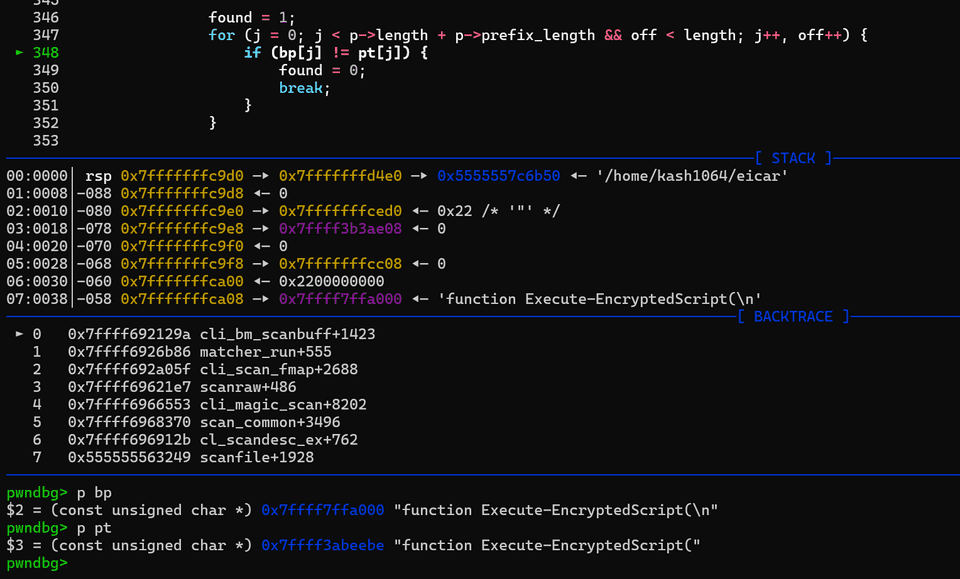

Reading ClamAV’s Implementation

Up to this point, I have summarized the BM algorithm, the BMH algorithm, and the WM method, which supports multi-pattern matching based on the same idea.

From here, I will read the actual implementation of ClamAV’s cli_bm_scanbuff function and examine how pattern matching is implemented in AntiVirus software.

Function Calls

The bm in the function name cli_bm_scanbuff stands for the BM algorithm. It is implemented as “Extended Boyer-Moore,” and its actual behavior is close to the WM method.

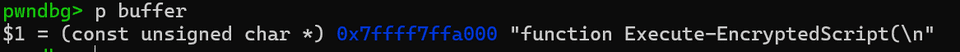

The cli_bm_scanbuff function is called with the following parameters, and when scanning files with clamscan, the data from the scanned file is passed in as buffer.

cl_error_t cli_bm_scanbuff(

const unsigned char *buffer,

uint32_t length,

const char **virname,

const struct cli_bm_patt **patt,

const struct cli_matcher *root,

uint32_t offset,

const struct cli_target_info *info,

struct cli_bm_off *offdata,

cli_ctx *ctx

);Building Tables in Preprocessing

Because ClamAV’s “Extended Boyer-Moore” behaves in practice much like the WM method, preprocessing is needed to create tables such as the SHIFT table and HASH table.

These tables are not created in cli_bm_scanbuff; instead, they appear to be stored in the cli_matcher object passed in as root.

struct cli_matcher {

unsigned int type;

/* Extended Boyer-Moore */

uint8_t *bm_shift;

struct cli_bm_patt **bm_suffix, **bm_pattab;

uint32_t *soff, soff_len; /* for PE section sigs */

uint32_t bm_offmode, bm_patterns, bm_reloff_num, bm_absoff_num;

/* HASH */

struct cli_hash_patt hm;

struct cli_hash_wild hwild;

/* Extended Aho-Corasick */

uint32_t ac_partsigs, ac_nodes, ac_lists, ac_patterns, ac_lsigs;

struct cli_ac_lsig **ac_lsigtable;

struct cli_ac_node *ac_root, **ac_nodetable;

struct cli_ac_list **ac_listtable;

struct cli_ac_patt **ac_pattable;

struct cli_ac_patt **ac_reloff;

uint32_t ac_reloff_num, ac_absoff_num;

uint8_t ac_mindepth, ac_maxdepth;

struct filter *filter;

uint16_t maxpatlen;

uint8_t ac_only;

{omitted}

};What corresponds to the SHIFT table in the WM method is bm_shift, and its values are set for each pattern to be matched by the cli_bm_addpatt function.

Scan Processing

The scan processing in cli_bm_scanbuff is implemented as shown below, in the same way as the WM scan described earlier.

It extracts 3 characters at a time from buffer, the data to be scanned, hashes them into an integer index with a custom macro, and checks the SHIFT table.

#define BM_MIN_LENGTH 3

#define BM_BLOCK_SIZE 3

#define HASH(a, b, c) (211 * a + 37 * b + c)

{omitted}

i = BM_MIN_LENGTH - BM_BLOCK_SIZE;

for (; i < length - BM_BLOCK_SIZE + 1;) {

idx = HASH(buffer[i], buffer[i + 1], buffer[i + 2]);

shift = root->bm_shift[idx];

if (shift == 0) {

prefix = buffer[i - BM_MIN_LENGTH + BM_BLOCK_SIZE];

p = root->bm_suffix[idx];

if (p && p->cnt == 1 && p->pattern0 != prefix) {

{omitted}Furthermore, when the SHIFT table’s shift amount is 0, ClamAV checks the PREFIX table and HASH table, compares the buffer with the pattern, and if they match, ultimately returns CL_VIRUS to report a detection.

Summary

Following the previous article on the Aho–Corasick algorithm, this time I summarized the BM algorithm and the WM method derived from it as information-search algorithms that support AntiVirus.